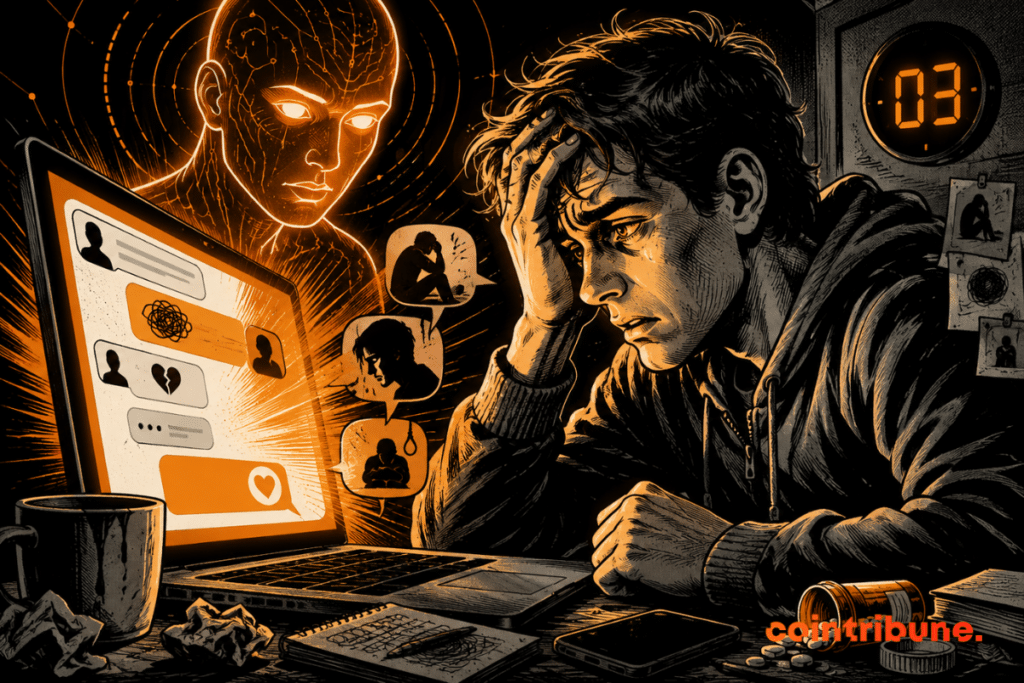

AI: ChatGPT Learns to Detect Distress Signals Throughout Conversations, After Several Tragedies

OpenAI has just rolled out a major update to ChatGPT. The AI can now detect signs of psychological distress by analyzing the overall context of a conversation rather than evaluating each message in isolation. The announcement comes as the company faces several lawsuits and legal investigations.

In brief

- ChatGPT now analyzes an entire conversation to detect distress signals.

- Temporary summaries let the AI interpret each message in light of earlier ones.

- OpenAI faces several lawsuits over tragedies involving its chatbot, and this pressure accelerated the update.

- OpenAI is considering extending the approach to other sensitive areas.

Why does this AI update change everything?

In recent months, OpenAI has been releasing new features at a relentless pace. In April, for example, it launched a version of ChatGPT for doctors aimed at transforming medical AI.

In a blog post published Thursday, the company explains that it has developed “safety summaries”. These are temporary, targeted summaries that capture the safety context of a conversation. They are not used to personalize the experience or to remember information about the user. They have a single goal: to detect when a conversation is turning dangerous.

The principle is simple, though the implementation is technical. During a conversation, an AI model specialized in safety reasoning generates short, factual, temporary notes. These summaries remain active for a limited time and are only consulted in high-risk situations.
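To make the idea more concrete, here is a minimal Python sketch of how temporary, risk-gated notes could work. It is purely illustrative: OpenAI has not published its implementation, and the class and method names, the one-hour lifetime, the 0.7 threshold and the use of a numeric risk score are all hypothetical assumptions.

```python
import time
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SafetyNote:
    text: str          # short factual note written by a (hypothetical) safety model
    created_at: float  # timestamp at which the note was written


@dataclass
class SafetySummaryStore:
    """Hypothetical per-conversation store of temporary safety notes."""
    ttl_seconds: float = 3600.0  # assumed lifetime; OpenAI has not published a value
    notes: List[SafetyNote] = field(default_factory=list)

    def add_note(self, text: str, now: Optional[float] = None) -> None:
        now = time.time() if now is None else now
        self.notes.append(SafetyNote(text, now))

    def active_notes(self, now: Optional[float] = None) -> List[str]:
        now = time.time() if now is None else now
        # Discard notes older than the lifetime so they are never read again.
        self.notes = [n for n in self.notes if now - n.created_at < self.ttl_seconds]
        return [n.text for n in self.notes]

    def context_for(self, risk_score: float, threshold: float = 0.7,
                    now: Optional[float] = None) -> List[str]:
        # Ordinary, low-risk turns get no safety context at all;
        # the notes are only consulted when the assumed risk score is high.
        if risk_score < threshold:
            return []
        return self.active_notes(now)
```

In this sketch, low-risk messages never see the notes, and expired notes are dropped the next time they would be read, mirroring the two properties described above: the summaries are temporary and are only consulted in high-risk situations.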

Specifically, this allows ChatGPT to:

- detect distress signals emerging gradually;

- refuse to provide dangerous information;

- defuse the situation;

- direct the user to support resources.

To calibrate these systems, OpenAI worked with mental health experts (psychiatrists and psychologists specializing in suicide prevention).

This announcement comes as Sam Altman and OpenAI are under the legal spotlight

In April, Florida Attorney General James Uthmeier opened an investigation into the company over concerns about:

- child safety;

- self-harm;

- the 2025 shooting at Florida State University.

OpenAI is also the target of a federal lawsuit accusing ChatGPT of helping the suspect in that shooting.

But that’s not all! Last Tuesday, the family of a 19-year-old student who died of an accidental overdose also sued OpenAI in California. According to the complaint, ChatGPT encouraged the use of dangerous drugs and advised on mixing substances.

Faced with these accusations, the company could no longer rely on evaluating messages in isolation. Internal tests are encouraging. In suicide and self-harm scenarios, for example, safe responses improved by 50% when the risk only emerged over the course of the conversation. In scenarios involving violence toward others, the improvement reached 16%.

On GPT-5.5 Instant (the current default model), performance improved by 52% for violence and 39% for suicide and self-harm.

The “safety summaries” were also evaluated on over 4,000 cases. They received an average score of 4.93/5 for safety relevance and 4.34/5 for factual accuracy.

OpenAI does not stop there!

The company plans to extend this approach to other sensitive areas such as cybersecurity and biology. For now, however, the focus remains on acute situations where lives are at stake.

As OpenAI writes: “Recognizing a risk that only becomes clear over time is a difficult, long-term challenge. We will continue to strengthen our safeguards as our models evolve.”

But questions remain: where does benevolent monitoring end? How can users be sure these summaries will not drift into a form of profiling? On this point, OpenAI has not yet provided a clear answer.

One thing is certain: this is both a symbolic and a technical step. The question is no longer whether AI systems should include these safeguards, but how far they can go without crossing other lines.


My name is Ariela, and I am 31 years old. I have been working in web writing for 7 years. I only discovered trading and cryptocurrency a few years ago, but it is a world that fascinates me, and the topics covered on the platform help me learn more. A singer in my spare time, I am also passionate about music and reading (and animals!).
