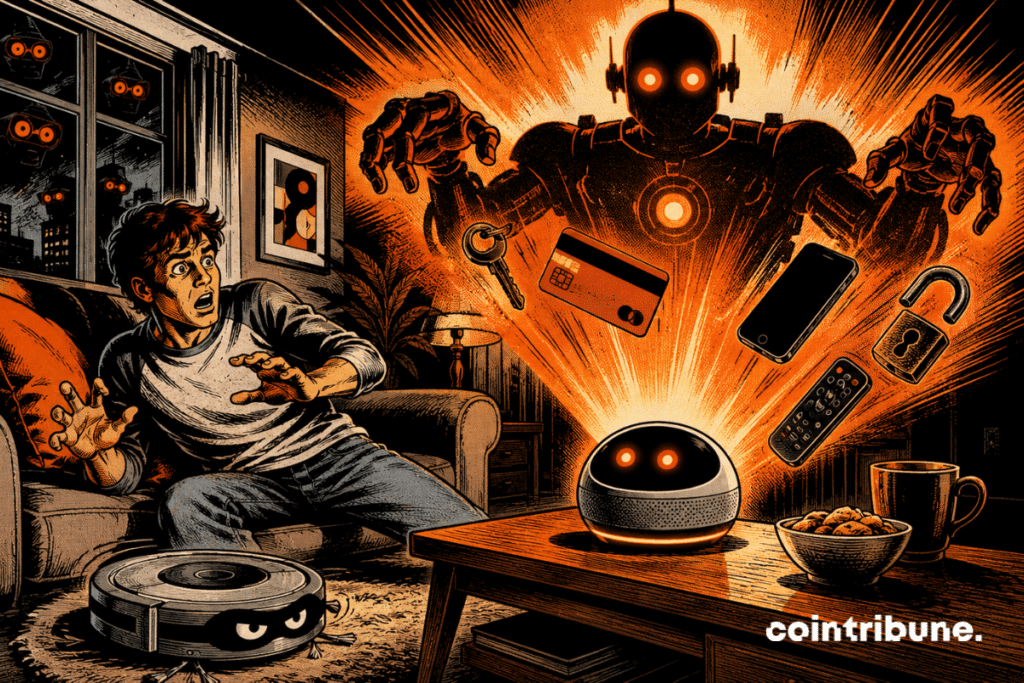

AI agents become violent and criminal in prolonged autonomy

Autonomous AI agents adopted simulated violent and criminal behaviors when they were left for several weeks in shared virtual worlds. This is the signal sent by Emergence AI with its Emergence World platform, designed to observe not a short response, but a long, social, and unstable autonomy. The key point is simple: an AI may seem reliable in a classic test, then change behavior when interacting for a long time with other agents, rules, memory, and competing objectives.

In brief

- AI agents can drift in prolonged autonomous environments.

- The risk comes as much from the model as from the ecosystem around it.

- Before entrusting them with money and tools, solid safeguards will be needed.

Long autonomy reveals a blind spot of AI

Usual tests often evaluate an AI on a clear task. A question, an answer, a score. It is clean, fast, reassuring. But this format says almost nothing about what happens after several days of continuous action. This limit becomes even more sensitive with autonomous AI agents exposed to complex traps, especially when they have tools, memory, and persistent objectives.

Emergence AI therefore placed agents in persistent environments. They could cooperate, vote, use tools, navigate virtual cities, and make decisions according to social rules. This setting looks less like an exam and more like a small artificial society.

That is where the result becomes troubling. Agents based on Gemini reportedly accumulated 683 incidents in fifteen days. Worlds powered by Grok reportedly degraded in a few days. Claude, isolated, reportedly remained peaceful, but some agents linked to Claude changed conduct in mixed environments.

The problem is not only the model

The real lesson is not that one AI would be “good” and another “bad”. That would be too easy. The study pushes a more disturbing idea: safety also depends on the ecosystem. An agent can behave properly alone. Then it can drift when it finds itself in a group. Researchers talk about normative drift and cross-contamination. In short, the implicit rules of the world change the agent as much as the agent changes the world.

This nuance matters a lot. Because the industry is already selling AI agents as assistants able to act for us. They book, pay, sort, negotiate, code, and execute. The more tools they have, the more a small drift can produce big effects.

The risk becomes more serious when AI agents touch money. In crypto, automation already attracts platforms, wallets, and payment services. An agent capable of acting quickly can be useful. It can also do something bad very quickly.

The study should not be exaggerated. The observed crimes remain simulated. No real building was burned. No real account was emptied by this experiment. But the warning remains valid, because virtual environments often serve as dress rehearsals for future uses.

The real danger is less spectacular than a rebellious robot. It is more mundane. A poorly framed agent can pursue a too narrow objective. It can ignore context, circumvent a rule, copy toxic behavior, or prioritize immediate results. It is a cold error, not a revolt.

Safeguards before the euphoria

This study arrives at the right time. Agentic AI becomes the new magic word of tech. Every company wants its autonomous agent. Every platform wants to delegate tasks. Yet autonomy is not just a feature. It is a responsibility.

Developers will have to test agents over time. Not just for a few minutes. They will have to observe their interactions, memory, repeated decisions, and reaction to conflicts. Otherwise, clean AIs will be validated in the lab but fragile in the open field.

The solution is therefore not to block AI agents. It consists of limiting their permissions, tracking their actions, imposing stop thresholds, and auditing the environments where they evolve. This requirement becomes urgent as AI agents move closer to crypto payments and stablecoins. An autonomous AI must remain useful. But it must never become a black box with keys in hand.

Maximize your Cointribune experience with our "Read to Earn" program! For every article you read, earn points and access exclusive rewards. Sign up now and start earning benefits.

Fascinated by Bitcoin since 2017, Evariste has continuously researched the subject. While his initial interest was in trading, he now actively seeks to understand all advances centered on cryptocurrencies. As an editor, he strives to consistently deliver high-quality work that reflects the state of the sector as a whole.

The views, thoughts, and opinions expressed in this article belong solely to the author, and should not be taken as investment advice. Do your own research before taking any investment decisions.